Building a Minimal Kafka System You Can Actually Debug

Series

Kafka Mastery

2 of 3 in the series

A series about using Kafka in real JVM systems, with each article anchored on a concrete failure mode or design decision.

The smallest useful Kafka setup is a producer, a consumer, and a way to see what is happening. Local visibility tooling is part of the development environment, not an extra.

Producers are easy. The argument for building a minimal Kafka system carefully is not about getting your first event flowing. It is about what happens the first time something breaks.

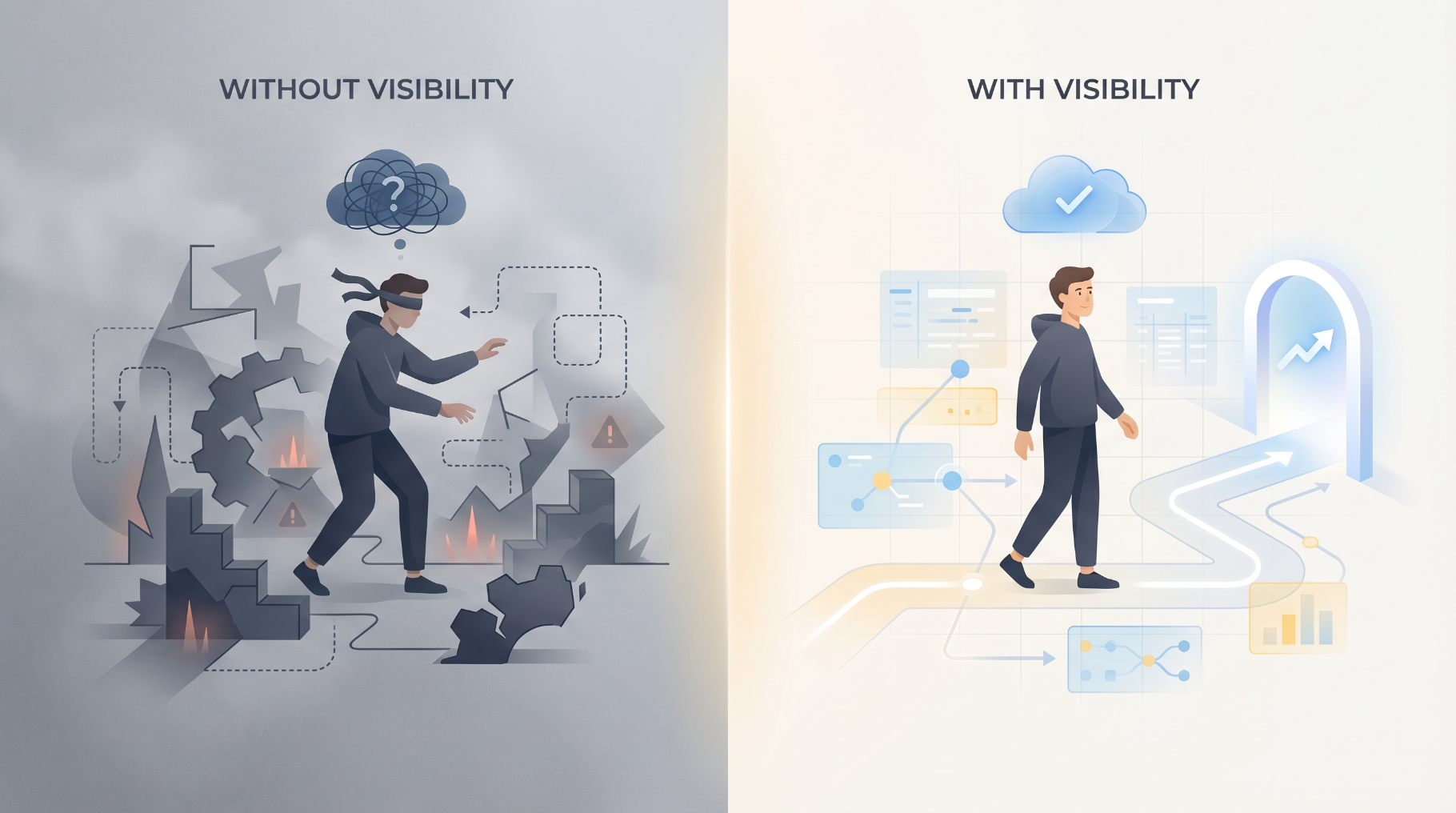

Running Kafka blind, with no visibility into topics, partitions, offsets, or consumer-group state, makes every later bug far harder to diagnose.

Why Visibility Matters From Day One

A REST endpoint that misbehaves can be inspected in three seconds with curl or browser devtools. A Kafka producer that misbehaves can run for hours with no errors and no output. The producer thinks it published. The consumer is silent. The bug lives in a topic name, an offset, or a consumer group that no one is looking at.

Without a UI, the loop is:

- Add logging to the producer

- Restart it

- Add logging to the consumer

- Restart it

- Try to remember what the topic name actually is

- Realize the consumer is in a different consumer group than expected

- Restart the world

With a UI, the loop is:

- Open the UI

- Look at the topic

- Look at the consumer-group lag

- See the problem

Tutorials skip this because their examples never break. Real systems break, and they break in places that only a UI makes visible.

What This System Is

A minimal Kafka system that is honest about what it includes and what it leaves for later:

- Apache Kafka 3.9 in KRaft mode (no Zookeeper)

- A single broker, sized for local development

- Kafka UI (the Provectus project) wired in from the first commit

- A Spring Boot producer that publishes OrderCreatedEvent

- A Spring Boot consumer that reads it

- JSON serialization, with a note that this is temporary

- No authentication, with a note that this is temporary

The two notes matter. JSON is contractless and gets replaced later in the series with Avro and Schema Registry. Running without authentication is fine for local development and unsafe everywhere else.

This article uses Java 21, Spring Boot 3.4, Spring Kafka 3.3, and Apache Kafka 3.9 in KRaft mode. Subsequent articles use the same pins.

Docker Compose

KRaft removes Zookeeper from the picture, which removes one container, one set of failure modes, and a long list of configuration that no longer applies. A single-broker local cluster is fine for everything in the early articles.

```yaml
services:
  kafka:
    # Confluent Platform 7.9.x bundles Apache Kafka 3.9 (7.8.x bundles 3.8)
    image: confluentinc/cp-kafka:7.9.0
    container_name: kafka
    ports:
      - "9092:9092"
      - "9093:9093"
    environment:
      KAFKA_NODE_ID: 1
      # Combined mode: one process acts as both broker and KRaft controller
      KAFKA_PROCESS_ROLES: "broker,controller"
      KAFKA_LISTENERS: "INTERNAL://0.0.0.0:29092,EXTERNAL://0.0.0.0:9092,CONTROLLER://0.0.0.0:9093"
      KAFKA_ADVERTISED_LISTENERS: "INTERNAL://kafka:29092,EXTERNAL://localhost:9092"
      KAFKA_CONTROLLER_LISTENER_NAMES: "CONTROLLER"
      KAFKA_CONTROLLER_QUORUM_VOTERS: "1@kafka:9093"
      KAFKA_LISTENER_SECURITY_PROTOCOL_MAP: "INTERNAL:PLAINTEXT,EXTERNAL:PLAINTEXT,CONTROLLER:PLAINTEXT"
      KAFKA_INTER_BROKER_LISTENER_NAME: "INTERNAL"
      # Single-broker overrides, local development only
      KAFKA_OFFSETS_TOPIC_REPLICATION_FACTOR: 1
      KAFKA_TRANSACTION_STATE_LOG_REPLICATION_FACTOR: 1
      KAFKA_TRANSACTION_STATE_LOG_MIN_ISR: 1
      CLUSTER_ID: "MkU3OEVBNTcwNTJENDM2Qg"

  kafka-ui:
    image: provectuslabs/kafka-ui:latest
    container_name: kafka-ui
    ports:
      - "8080:8080"
    environment:
      KAFKA_CLUSTERS_0_NAME: "local"
      # Reaches the broker over the Docker network, so it uses the INTERNAL listener
      KAFKA_CLUSTERS_0_BOOTSTRAPSERVERS: "kafka:29092"
    depends_on:
      - kafka
```

Two listener choices matter, and they are not optional in a Docker setup:

- INTERNAL://kafka:29092 is what other containers (Kafka UI, any Dockerised consumer) use to reach the broker by service name on the Docker network.

- EXTERNAL://localhost:9092 is what processes on the host (Spring Boot running in your IDE) use to reach the same broker.

Advertising only one listener address is one of the most common Kafka-on-Docker mistakes. The broker hands the advertised address back to clients during metadata lookup, so a single advertised localhost:9092 tells Kafka UI to dial localhost:9092 from inside its own container, where there is no broker. Advertising one address per network avoids this.
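The lookup rule can be modelled in a few lines of plain Java. This is a toy sketch, not Kafka client code: the class AdvertisedListeners and its method addressFor are illustrative names, and the only behavior it models is that the broker answers with the advertised address of the listener the client bootstrapped on.

```java
import java.util.Map;

/** Toy model of the advertised-listener lookup. Names are illustrative, not Kafka APIs. */
final class AdvertisedListeners {

    // Listener name -> address the broker hands back in metadata responses.
    private final Map<String, String> advertised;

    AdvertisedListeners(Map<String, String> advertised) {
        this.advertised = Map.copyOf(advertised);
    }

    /** Address a client is told to use after bootstrapping on the given listener. */
    String addressFor(String listenerName) {
        String address = advertised.get(listenerName);
        if (address == null) {
            throw new IllegalArgumentException(
                    "No advertised address for listener " + listenerName);
        }
        return address;
    }
}
```

With the two-listener map from the Compose file, a container that bootstraps on INTERNAL is sent to kafka:29092 and a host process on EXTERNAL is sent to localhost:9092. With a single listener advertised as localhost:9092, every client gets localhost:9092 back, including the ones running inside other containers.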

Three local-only overrides also matter:

- KAFKA_OFFSETS_TOPIC_REPLICATION_FACTOR=1 because there is only one broker

- KAFKA_TRANSACTION_STATE_LOG_REPLICATION_FACTOR=1 for the same reason

- KAFKA_TRANSACTION_STATE_LOG_MIN_ISR=1 so transactional initialization does not fail

Kafka's defaults are 3 for both replication factors and 2 for the transaction-log minimum ISR, which is correct for production and wrong for a single-broker dev box.
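The reasoning behind the overrides is two inequalities: an internal topic cannot have more replicas than there are brokers, and the minimum in-sync replica count cannot exceed the replication factor. A minimal sketch of that check, assuming a hypothetical helper class InternalTopicSettings that is not part of Kafka:

```java
import java.util.Optional;

/** Hypothetical helper: checks internal-topic settings against the broker count. */
final class InternalTopicSettings {

    /** Returns a description of the problem, or empty if the settings can be satisfied. */
    static Optional<String> problem(int brokerCount, int replicationFactor, int minIsr) {
        if (replicationFactor > brokerCount) {
            return Optional.of("replication factor " + replicationFactor
                    + " needs " + replicationFactor + " brokers, only "
                    + brokerCount + " available");
        }
        if (minIsr > replicationFactor) {
            return Optional.of("min ISR " + minIsr
                    + " can never be met with replication factor " + replicationFactor);
        }
        return Optional.empty();
    }
}
```

Calling problem(1, 3, 2) with the production defaults on one broker reports the replication-factor problem, while problem(1, 1, 1) with the local overrides returns empty.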

The Producer

A small Spring Boot service that exposes a POST endpoint and publishes an event.

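```java
import java.time.Instant;
import java.util.UUID;

// The event payload, serialized to JSON by the producer
public record OrderCreatedEvent(
        UUID orderId,
        UUID customerId,
        long amountCents,
        String currency,
        Instant createdAt
) {}
```

```java
import org.springframework.kafka.core.KafkaTemplate;
import org.springframework.stereotype.Service;

@Service
public class OrderProducer {

    private static final String TOPIC = "order.created";

    private final KafkaTemplate<String, OrderCreatedEvent> kafkaTemplate;

    public OrderProducer(KafkaTemplate<String, OrderCreatedEvent> kafkaTemplate) {
        this.kafkaTemplate = kafkaTemplate;
    }

    public void publish(OrderCreatedEvent event) {
        // Keyed by order ID. send() is asynchronous and its result is not awaited here.
        kafkaTemplate.send(TOPIC, event.orderId().toString(), event);
    }
}
```

```java
import java.time.Instant;
import java.util.UUID;

import org.springframework.http.ResponseEntity;
import org.springframework.web.bind.annotation.PostMapping;
import org.springframework.web.bind.annotation.RequestBody;
import org.springframework.web.bind.annotation.RequestMapping;
import org.springframework.web.bind.annotation.RestController;

@RestController
@RequestMapping("/orders")
public class OrderController {

    private final OrderProducer orderProducer;

    public OrderController(OrderProducer orderProducer) {
        this.orderProducer = orderProducer;
    }

    // CreateOrderRequest (not shown) carries customerId, amountCents, and currency
    @PostMapping
    public ResponseEntity<UUID> create(@RequestBody CreateOrderRequest request) {
        OrderCreatedEvent event = new OrderCreatedEvent(
                UUID.randomUUID(),
                request.customerId(),
                request.amountCents(),
                request.currency(),
                Instant.now()
        );
        orderProducer.publish(event);
        return ResponseEntity.accepted().body(event.orderId());
    }
}
```

The endpoint returns 202 Accepted, not 201 Created. The order has been accepted for processing. It has not been confirmed. That distinction becomes the failure scenario in the next article.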

The Consumer

```java
import org.slf4j.Logger;
import org.slf4j.LoggerFactory;
import org.springframework.kafka.annotation.KafkaListener;
import org.springframework.stereotype.Component;

@Component
public class OrderConsumer {

    private static final Logger log = LoggerFactory.getLogger(OrderConsumer.class);

    // Topic name and group ID must match what the producer and Kafka UI show
    @KafkaListener(topics = "order.created", groupId = "order-logger")
    public void onOrderCreated(OrderCreatedEvent event) {
        log.info("Received order {} for customer {} amount {} {}",
                event.orderId(),
                event.customerId(),
                event.amountCents(),
                event.currency());
    }
}
```

A consumer that does nothing but log is enough to prove the system works end to end and to anchor the visibility walkthrough below.

Spring Configuration

```yaml
spring:
  kafka:
    bootstrap-servers: localhost:9092
    producer:
      key-serializer: org.apache.kafka.common.serialization.StringSerializer
      value-serializer: org.springframework.kafka.support.serializer.JsonSerializer
      acks: all
    consumer:
      group-id: order-logger
      auto-offset-reset: earliest
      key-deserializer: org.apache.kafka.common.serialization.StringDeserializer
      value-deserializer: org.springframework.kafka.support.serializer.JsonDeserializer
      properties:
        spring.json.trusted.packages: "com.example.events"
```

acks: all is the only producer reliability setting that belongs in a starter file.

What To Look At In Kafka UI

Open http://localhost:8080. Six things are worth knowing how to find before any real bug happens.

- Topics list. The order.created topic should appear after the first publish. If it is missing, the producer is talking to a different bootstrap address or the topic name is wrong on the producer side.

- Topic detail and partitions. Number of partitions, replication factor, retention configuration. A single-broker cluster has replication factor 1 by default for new topics.

- Message browser. Recent messages on a partition, with key, value, headers, and offset. The fastest way to see whether the producer is publishing what the developer thinks it is.

- Consumer groups. The order-logger group, its members, the partitions assigned to each member, and the committed offset per partition.

- Consumer-group lag. The difference between the latest offset and the committed offset for the group. Lag is the most important reliability metric in Kafka.

- Broker health. Under-replicated partition count, request latency, controller status. Quiet for now. Loud later if the broker is unhealthy.

If the team can navigate to all six during a normal day, they can investigate most production incidents during a bad one.
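The lag figure in the UI is simple arithmetic, and it helps to have done it by hand once. A minimal sketch in plain Java, assuming a hypothetical ConsumerLag helper and offsets supplied as maps keyed by partition number. It simplifies one thing: a partition with no committed offset is counted from 0, whereas a real consumer would start from wherever auto-offset-reset points.

```java
import java.util.Map;
import java.util.TreeMap;

/** Hypothetical helper: consumer-group lag from end offsets and committed offsets. */
final class ConsumerLag {

    /** Lag per partition: latest (log-end) offset minus the group's committed offset. */
    static Map<Integer, Long> perPartition(Map<Integer, Long> endOffsets,
                                           Map<Integer, Long> committedOffsets) {
        Map<Integer, Long> lag = new TreeMap<>();
        for (Map.Entry<Integer, Long> entry : endOffsets.entrySet()) {
            // Simplification: no commit yet is treated as offset 0.
            long committed = committedOffsets.getOrDefault(entry.getKey(), 0L);
            lag.put(entry.getKey(), Math.max(0L, entry.getValue() - committed));
        }
        return lag;
    }

    /** Total lag for the group across all partitions of the topic. */
    static long total(Map<Integer, Long> endOffsets, Map<Integer, Long> committedOffsets) {
        long sum = 0L;
        for (long partitionLag : perPartition(endOffsets, committedOffsets).values()) {
            sum += partitionLag;
        }
        return sum;
    }
}
```

For end offsets of 120, 95, and 40 on partitions 0 to 2, with commits of 120 and 90 on the first two, the per-partition lag is 0, 5, and 40, for a total of 45.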

The Failure Scenario: One Wrong Character

The producer publishes to order.created. The consumer is configured for order.create (a typo). No errors. The producer call returns 202 Accepted. The consumer logs nothing. The endpoint looks healthy. Lag in the order-logger group is zero, because the group is subscribed to order.create, which is either an empty auto-created topic or does not exist at all.

Without Kafka UI, the natural debugging path is to add logs, redeploy, restart, and stare at code. With Kafka UI:

- Open Topics. order.created exists, with messages flowing in.

- Open Consumer Groups. order-logger is subscribed to order.create, which has no messages.

- The typo is visible in under a minute.

This is not a contrived bug. Topic-name typos, environment-mismatched bootstrap servers, and consumer-group ID drift across deployments produce the same observable behavior. None of them log an error. All of them are obvious in a UI and invisible without one.
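The same comparison the UI makes visible can be written down as a check: which subscribed topics are never produced to, yet sit one edit away from a topic that is? A minimal sketch in plain Java, assuming a hypothetical TopicTypoCheck helper that is given the two sets of topic names. How those sets are collected (from the UI, from configuration, from an admin client) is left out.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Set;
import java.util.TreeSet;

/** Hypothetical helper: flags subscribed topics that look like typos of produced ones. */
final class TopicTypoCheck {

    /** Levenshtein edit distance, computed with two rolling rows. */
    static int editDistance(String a, String b) {
        int[] prev = new int[b.length() + 1];
        int[] curr = new int[b.length() + 1];
        for (int j = 0; j <= b.length(); j++) {
            prev[j] = j;
        }
        for (int i = 1; i <= a.length(); i++) {
            curr[0] = i;
            for (int j = 1; j <= b.length(); j++) {
                int cost = a.charAt(i - 1) == b.charAt(j - 1) ? 0 : 1;
                curr[j] = Math.min(Math.min(curr[j - 1] + 1, prev[j] + 1), prev[j - 1] + cost);
            }
            int[] tmp = prev;
            prev = curr;
            curr = tmp;
        }
        return prev[b.length()];
    }

    /** Subscribed topics that are not produced to but are within maxDistance of one that is. */
    static List<String> suspects(Set<String> producedTopics,
                                 Set<String> subscribedTopics,
                                 int maxDistance) {
        List<String> found = new ArrayList<>();
        // TreeSet gives a stable, sorted iteration order for the report.
        for (String subscribed : new TreeSet<>(subscribedTopics)) {
            if (producedTopics.contains(subscribed)) {
                continue;
            }
            for (String produced : new TreeSet<>(producedTopics)) {
                if (editDistance(subscribed, produced) <= maxDistance) {
                    found.add(subscribed + " -> " + produced);
                    break;
                }
            }
        }
        return found;
    }
}
```

For the scenario above, suspects(Set.of("order.created"), Set.of("order.create"), 1) returns a single entry pairing order.create with order.created. It does nothing for mismatched bootstrap servers or group-ID drift, which still need the UI.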

Suggested Module Shape

The two services are deliberately separate processes. Producer and consumer in the same JVM is a tutorial pattern that hides every interesting Kafka behavior.

What Most People Get Wrong

Skipping local tooling. The reflex is "I will add a UI later, once I really need it." That sentence is always wrong. The point at which a UI is really needed is the point at which a production incident is already in progress, and the local muscle memory for the UI does not exist.

Kafka UI is one extra container that takes one block of YAML to wire up. It removes an entire category of "I am staring at code that should work" debugging.

What Comes Next

The next article takes this one-producer, one-consumer setup and turns it into a three-service event-driven flow. That is also the first place where a service writes to a database and publishes an event in the same code path, which is the first place the dual-write problem can hide. That article names the problem and seeds it. The fix comes later.