AI Agents Need Architectural Boundaries, Not Just Prompts

Series: Agentic Engineering (1 of 3)

A series on building AI-assisted delivery systems that stay coherent: governed agents, shared product memory, and delivery workflows that reduce drift across teams and platforms.

Most teams approach agentic AI systems the same way they approached early microservices: with optimism about autonomy and insufficient thought about failure modes. The boundary problem is the same. Only the symptoms are different.

Teams building agentic AI systems right now are repeating a mistake from early microservices: they are solving the capability problem before they solve the boundary problem.

With microservices, the capability problem was easy. You could decompose a monolith into services quickly. The hard problem (the one that took years to understand) was: where do the boundaries go? What does each service own? What are the failure modes when a service goes down? Who is responsible when data becomes inconsistent across three services that all thought they owned part of it?

The same problem is now repeating with agents.

The Capability Trap

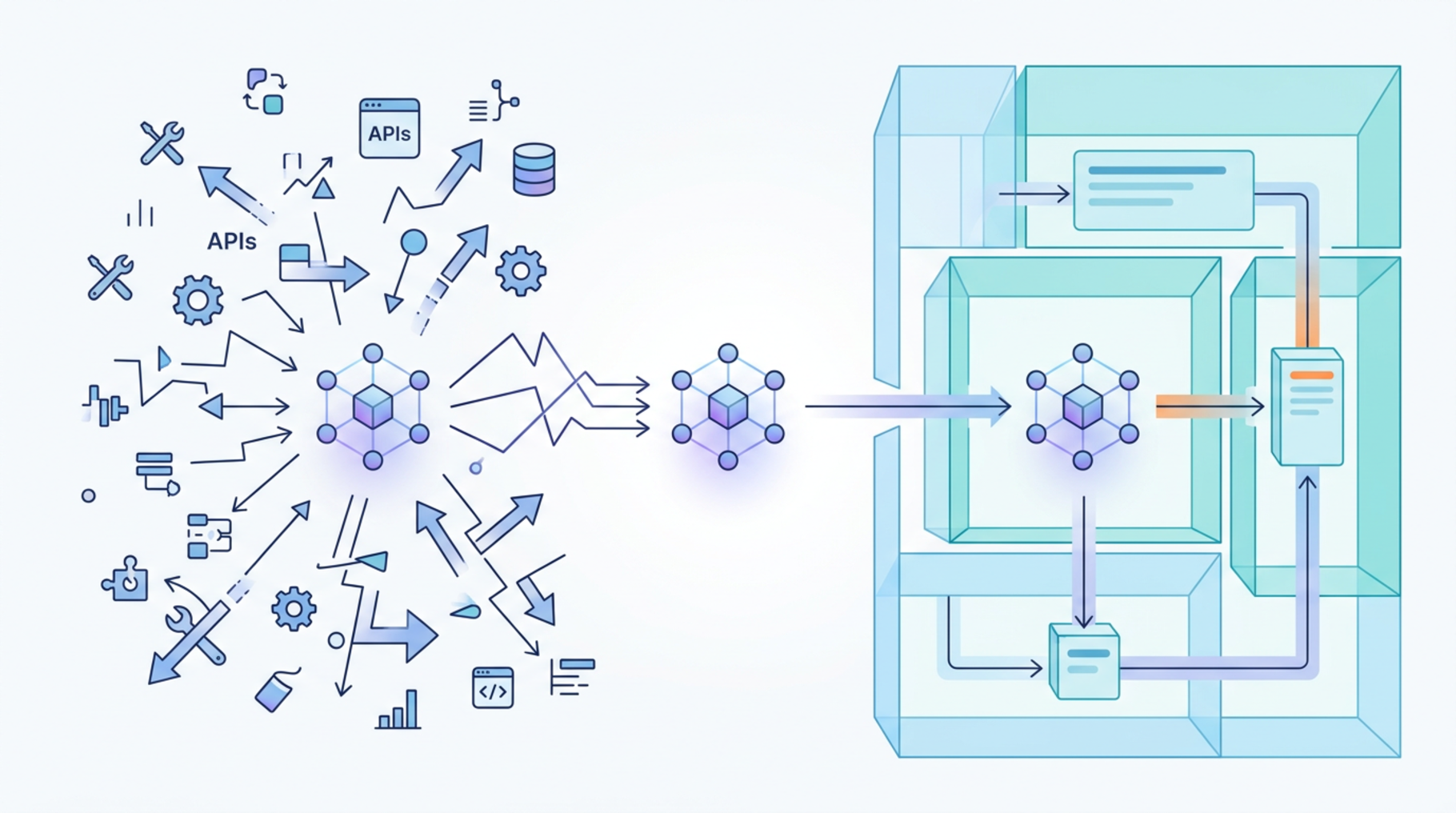

Building an agent that can do something is fast. You give an LLM a set of tools, write a system prompt, and watch it act. The demos are compelling. The agent browses the web, reads documents, calls APIs, generates reports. It looks like automation.

But "can do" and "should do" are different questions. And "should do reliably, with clear failure modes, in a production system that other people depend on" is a third question entirely.

When you ask most teams where the boundary of their agent is, you get one of two answers:

- A list of tools the agent can call

- A description of what the agent is supposed to accomplish

Neither of these is an architectural boundary. A list of tools is a capability set. A goal description is an intent specification. Neither tells you:

- What happens when the agent takes an unexpected path to achieve the goal

- Who is responsible for validating the agent's decisions before they become irreversible

- What the failure mode is when the agent encounters ambiguity

- How you debug what the agent did and why

Why Boundaries Matter More Than Capabilities

An architectural boundary for an agent is a clear definition of:

- What the agent owns exclusively: data, state, or actions that only the agent is responsible for

- What the agent must not touch: systems, data, or decisions that require human confirmation or are owned by other components

- What the contract is with the outside world: what the agent promises to its callers, in terms of outputs, latency, and failure behavior

Without these three definitions, you do not have an agent with a boundary. You have an agent with a prompt. And a prompt is not an architectural guarantee.
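One way to make the three definitions concrete is to write them down as data rather than prose. The sketch below is a minimal illustration, not a prescribed schema: `AgentBoundary`, its field names, and the example resource names (`escalation_notes`, `account_history`, `issue_refund`) are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Access(Enum):
    DENIED = "denied"
    READ = "read"
    WRITE = "write"


@dataclass(frozen=True)
class AgentBoundary:
    """Declarative boundary for one agent (all names are illustrative)."""
    owns: frozenset            # resources only this agent may write
    read_only: frozenset       # resources the agent may read but never write
    requires_human: frozenset  # actions that need human confirmation
    max_latency_s: float       # latency promised to callers
    on_failure: str            # promised failure behavior, e.g. "escalate"

    def access_for(self, resource: str) -> Access:
        if resource in self.owns:
            return Access.WRITE
        if resource in self.read_only:
            return Access.READ
        # Anything not listed is outside the boundary by default.
        return Access.DENIED


support_boundary = AgentBoundary(
    owns=frozenset({"escalation_notes"}),
    read_only=frozenset({"account_history"}),
    requires_human=frozenset({"issue_refund"}),
    max_latency_s=30.0,
    on_failure="escalate",
)
```

The design choice worth noting is the default: a resource that nobody listed is denied, so forgetting to declare something narrows the agent's authority instead of widening it.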

A Concrete Example

Consider an agent designed to handle customer support escalations. The prompt says: "Review the customer's issue, check their account history, and either resolve the issue or escalate to a human agent."

The capability boundary seems clear. But what is the architectural boundary?

- Can the agent modify account data directly, or only read it?

- If the agent resolves an issue by issuing a refund, is that action reversible?

- If the account history lookup fails, does the agent fail closed (escalate everything) or fail open (attempt resolution without context)?

- What does "escalate to a human agent" mean: a database write, an API call, a queue message? Who owns the failure if that action fails?

These questions are not answered by the prompt. They are answered by the architecture. And if you have not answered them before building the agent, you will answer them the hard way: in production, when something goes wrong.
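As an illustration of answering one of these questions in code, here is a minimal sketch of the fail-closed choice for the history lookup. It assumes the lookup and the resolution step are passed in as plain callables; `handle_ticket`, `fetch_history`, and `try_resolve` are hypothetical names, not part of any real support system.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Decision:
    action: str  # "resolve" or "escalate"
    reason: str


def handle_ticket(
    customer_id: str,
    fetch_history: Callable[[str], list],
    try_resolve: Callable[[list], Optional[str]],
) -> Decision:
    """Fail closed: if context is missing, escalate instead of guessing."""
    try:
        history = fetch_history(customer_id)
    except Exception as exc:
        # The lookup failed, so the agent has no context. Escalate.
        return Decision("escalate", f"history lookup failed: {exc}")

    resolution = try_resolve(history)
    if resolution is None:
        return Decision("escalate", "no confident resolution")
    return Decision("resolve", resolution)
```

The fail-open alternative would call `try_resolve` with an empty history in the `except` branch. Either choice can be defensible; the point is that it is made in the code path, where it can be reviewed and tested.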

What Good Boundaries Look Like

A well-bounded agent has:

A clear read/write contract. The agent knows exactly which systems it can read from, which systems it can write to, and which writes are reversible versus permanent. This is not a prompt instruction. It is enforced at the infrastructure level. The agent should not be able to take an irreversible action that the architecture does not explicitly permit.
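A minimal sketch of what enforcement outside the prompt could look like: every tool call goes through a gateway that the model cannot bypass, and irreversible tools are blocked unless explicitly permitted. `ToolGateway`, `BoundaryViolation`, and the two example tools are hypothetical; a real deployment would more likely enforce this with credentials, network policy, or API scopes.

```python
from typing import Any, Callable


class BoundaryViolation(Exception):
    """Raised when the agent requests an action outside its boundary."""


class ToolGateway:
    """Hypothetical gateway between the model and real systems."""

    def __init__(self) -> None:
        # name -> (callable, is_irreversible)
        self._tools: dict = {}
        self._permitted_irreversible: set = set()

    def register(self, name: str, fn: Callable[..., Any],
                 irreversible: bool = False) -> None:
        self._tools[name] = (fn, irreversible)

    def permit_irreversible(self, name: str) -> None:
        # An explicit architectural decision, made outside the prompt.
        self._permitted_irreversible.add(name)

    def call(self, name: str, **kwargs: Any) -> Any:
        if name not in self._tools:
            raise BoundaryViolation(f"unknown tool: {name}")
        fn, irreversible = self._tools[name]
        if irreversible and name not in self._permitted_irreversible:
            raise BoundaryViolation(f"irreversible action not permitted: {name}")
        return fn(**kwargs)


gateway = ToolGateway()
gateway.register("read_account", lambda account_id: {"id": account_id})
gateway.register("issue_refund", lambda account_id, amount: "refunded",
                 irreversible=True)
```

With this setup, `gateway.call("issue_refund", ...)` raises `BoundaryViolation` no matter what the prompt says, until someone calls `permit_irreversible("issue_refund")` on purpose.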

Explicit uncertainty handling. When the agent encounters a case outside its boundary, it should fail in a defined way. "I cannot handle this reliably" is a valid agent output. It should be designed as a first-class result, not treated as a failure to be avoided by expanding the agent's capabilities.
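One way to treat "I cannot handle this reliably" as a first-class result is to give it its own type, so callers must route it deliberately. This is a sketch under assumed names: `Resolved`, `Escalated`, `CannotHandle`, and `route` are illustrative, as are the routing strings.

```python
from dataclasses import dataclass
from typing import Union


@dataclass(frozen=True)
class Resolved:
    summary: str


@dataclass(frozen=True)
class Escalated:
    queue: str


@dataclass(frozen=True)
class CannotHandle:
    reason: str  # why the case falls outside the agent's boundary


AgentResult = Union[Resolved, Escalated, CannotHandle]


def route(result: AgentResult) -> str:
    """Every outcome, including 'cannot handle', has a defined next step."""
    if isinstance(result, Resolved):
        return "close_ticket"
    if isinstance(result, Escalated):
        return f"enqueue:{result.queue}"
    if isinstance(result, CannotHandle):
        return "handoff_to_human"
    raise TypeError(f"unexpected result type: {type(result).__name__}")
```

Because `CannotHandle` is a normal return value and not an exception, it can be counted, monitored, and reviewed like any other outcome.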

Observable decision points. Every decision the agent makes that has consequences outside its own state should be logged with enough context to reconstruct the reasoning. This is not logging for debugging convenience. It is logging because agents are not deterministic, and the audit trail is what makes the system trustworthy in regulated or high-stakes domains.
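A minimal sketch of such a decision record, assuming JSON lines as the log format. The field names and the `decision_record` helper are hypothetical; what matters is that the inputs, the stated rationale, and the model version travel together with the action.

```python
import json
import time
import uuid


def decision_record(agent: str, action: str, inputs: dict,
                    rationale: str, model_version: str) -> str:
    """Serialize one consequential decision as a single JSON line."""
    record = {
        "decision_id": str(uuid.uuid4()),  # stable handle for later audits
        "timestamp": time.time(),
        "agent": agent,
        "action": action,
        "inputs": inputs,                  # what the agent saw
        "rationale": rationale,            # what the agent said it was doing
        "model_version": model_version,    # needed to interpret the reasoning
    }
    return json.dumps(record, sort_keys=True)


line = decision_record(
    agent="support-escalation",
    action="escalate",
    inputs={"ticket_id": "T-1", "history_available": False},
    rationale="account history lookup failed",
    model_version="example-model-v1",
)
```

In practice the returned line would be appended to durable, append-only storage before the action itself is executed, so the trail exists even if the action fails midway.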

Rate and scope limits at the infrastructure layer. Not in the prompt. An agent told "do not call the payment API more than once per session" will sometimes call it twice. An agent architecturally prevented from calling the payment API more than once per session will not.
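The payment example can be sketched as a per-session counter that runs before the tool call, outside the model's control. `SessionLimiter`, `CallLimitExceeded`, and the tool name `payment_api` are hypothetical, and this in-memory version assumes a single process; a shared store would be needed across workers.

```python
from collections import defaultdict


class CallLimitExceeded(Exception):
    """Raised when a tool is called more often than the session allows."""


class SessionLimiter:
    """Hypothetical per-session call limiter, enforced outside the prompt."""

    def __init__(self, limits: dict) -> None:
        self._limits = limits            # tool name -> max calls per session
        self._counts = defaultdict(int)  # (session_id, tool) -> calls so far

    def guard(self, session_id: str, tool: str) -> None:
        limit = self._limits.get(tool)
        if limit is None:
            return  # no limit configured for this tool
        key = (session_id, tool)
        if self._counts[key] >= limit:
            raise CallLimitExceeded(f"{tool} limit of {limit} reached")
        self._counts[key] += 1


limiter = SessionLimiter({"payment_api": 1})
limiter.guard("session-1", "payment_api")  # first call is allowed
```

A second `limiter.guard("session-1", "payment_api")` raises `CallLimitExceeded`, regardless of what the model decides to attempt.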

The Deeper Point

The reason this matters is not just operational reliability. It is about where trust lives in a system.

A system you can trust is one where you know what each component is responsible for, what happens when it fails, and what the boundaries of its authority are. That is true for services, modules, and libraries. It is also true for agents.

Prompt engineering is a capability tool. Architectural boundaries are a trust tool. Both are necessary. The mistake is believing that the first replaces the need for the second.

Most teams are excellent at the first right now. The teams that will build AI systems that survive contact with production will be the ones who take the second seriously before they need to.