Keeping Spring Boot Delivery Aligned with Product Context and Engineering Standards

Series: Agentic Engineering (3 of 3)

A series on building AI-assisted delivery systems that stay coherent: governed agents, shared product memory, and delivery workflows that reduce drift across teams and platforms.

Backend delivery loses alignment by the third feature when agents work from generic prompts with no shared context. Focused skill decomposition, wiki-driven product context, and explicit delivery commands keep a Spring Boot repo consistent over time.

A lot of agent-driven backend delivery looks good right up until the second or third real feature.

The first prompt generates a controller. The next one creates a service. Then a migration. Then a security rule. Then a test. A few days later, the repo has already started to lose alignment. Controllers do too much. Error handling changes from endpoint to endpoint. Auth and authorization get mixed together. Migrations no longer tell a clean story about how the data model evolved.

That is the part most AI demos skip.

The interesting question is not whether an agent can produce Spring Boot code. It can. The harder question is whether the repo still feels consistent after ten features, three developers, and a few rounds of changing product requirements.

The Real Problem Is Inconsistency, Not Scaffolding

Most teams do not struggle to create the first version of an endpoint. They struggle to keep the next twenty changes aligned with the same architecture, the same business rules, and the same operational expectations.

This inconsistency usually shows up in predictable places:

- route security is present, but ownership and business authorization are inconsistent

- DTO boundaries start clean, then blur over time

- migrations get written as one-off fixes instead of part of a maintained schema history

- outbound integrations grow without clear timeout, retry, or idempotency rules

- logging and metrics become optional instead of expected

- performance review only starts after someone notices the system getting slower

Treating those as design concerns from the beginning, rather than cleanup work for later, is the shift that changes delivery quality.

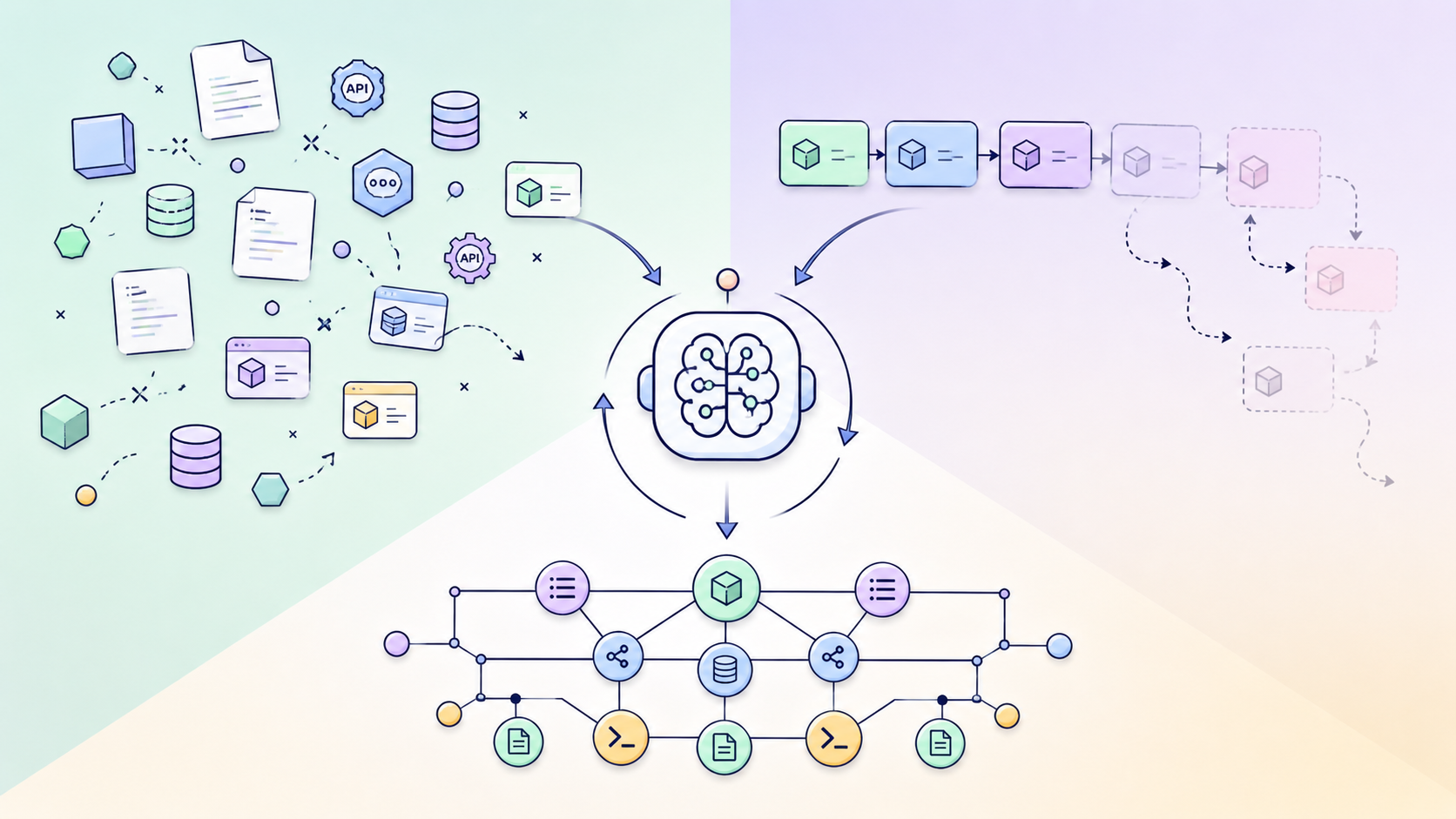

What a Structured Workspace Adds

A backend workspace built around this problem is not only code. It is code plus the structure around how backend work is supposed to happen.

That surface has four parts:

- A shared product wiki in knowledge/wiki/

- Platform-local agent guidance in files like backend/CLAUDE.md and backend/AGENTS.md

- Explicit workflow commands for recurring tasks

- Backend skills that encode specific technical concerns

The important part is how these pieces relate to each other.

The wiki defines what the team is building. Commands define how work moves. Skills define the technical rules that constrain implementation. The platform context files keep the local architecture readable inside the repo itself.

That structure matters because it reduces how much context each new session has to rebuild from scratch.

The Backend Skill Surface

The key design choice is to split backend guidance into focused skills instead of one broad "backend expert" prompt.

Foundation Skills

These are the daily guardrails. They shape the default code the agent writes.

- spring-boot-conventions: keeps layering, DTO boundaries, and Spring usage consistent

- security-auth: keeps route exposure, JWT handling, and auth bootstrap rules explicit and separate from business authorization

- error-handling: keeps API failure behavior typed and predictable

- testing-patterns: defines what counts as enough proof for backend changes

- jpa-kotlin-patterns: keeps entity modeling and Kotlin/JPA pitfalls from accumulating silently

- migration-conventions: keeps schema changes part of a readable history instead of a series of emergency fixes

- jackson-spring-boot4: serialization rules for the Jackson/Spring Boot 4 pairing
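To make "typed and predictable" failure behavior concrete, here is a minimal sketch in plain Kotlin with no Spring dependencies. The error types, codes, and status mapping are assumptions for illustration, not the contents of the error-handling skill itself.

```kotlin
// Hypothetical error model: names and status codes are illustrative, not taken from the repo.
sealed interface ApiError {
    val code: String
    val message: String
}

data class NotFound(val resource: String, val id: String) : ApiError {
    override val code = "NOT_FOUND"
    override val message = "$resource $id was not found"
}

data class Forbidden(val reason: String) : ApiError {
    override val code = "FORBIDDEN"
    override val message = reason
}

data class Conflict(val detail: String) : ApiError {
    override val code = "CONFLICT"
    override val message = detail
}

// One place decides the HTTP status, so endpoints cannot drift apart.
// The `when` is exhaustive over the sealed interface: adding a new error
// type fails compilation here until it is given a status.
fun httpStatusFor(error: ApiError): Int = when (error) {
    is NotFound -> 404
    is Forbidden -> 403
    is Conflict -> 409
}

// The response body every endpoint would return on failure.
data class ErrorBody(val status: Int, val code: String, val message: String)

fun toErrorBody(error: ApiError): ErrorBody =
    ErrorBody(httpStatusFor(error), error.code, error.message)
```

In a Spring Boot service, a mapping like this would typically sit behind a single exception handler, so that no controller invents its own error shape.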

Delivery Coordination

This is the part that turns a pile of reference docs into an actual workflow.

- backend-feature-delivery: the main delivery skill, keeping backend work contract-first and tying changes back to OpenAPI, DTOs, services, persistence, validation, security, and schema

- /add-endpoint: start a contract-first endpoint workflow

- /create-migration: add schema changes with migration discipline

- /generate-clients: regenerate clients from the shared OpenAPI contract

- /document-entity: update backend entity docs as the model evolves

backend-feature-delivery is especially important because it prevents the agent from treating each architectural layer as a separate prompt session. The contract comes first.
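One way to picture "the contract comes first" is a response DTO that mirrors the contract, with a single mapping point from the persistence model. The sketch below is plain Kotlin. Every type and field name is hypothetical, and it assumes amounts are stored in minor units of a two-decimal currency.

```kotlin
import java.math.BigDecimal

// Hypothetical persistence-side model; the field names are assumptions.
data class PayoutEntity(
    val id: Long,
    val amountMinor: Long,      // amount in minor units, e.g. cents
    val currency: String,
    val internalRiskScore: Int, // internal detail that must not leak into the API
)

// Response shape as the OpenAPI contract would define it (hypothetical).
data class PayoutResponse(val id: String, val amount: String, val currency: String)

// The single mapping point between persistence and contract.
// Assumes a two-decimal currency; a real mapper would look up the currency's scale.
fun PayoutEntity.toResponse(): PayoutResponse =
    PayoutResponse(
        id = id.toString(),
        amount = BigDecimal.valueOf(amountMinor, 2).toPlainString(),
        currency = currency,
    )
```

Because the DTO is shaped by the contract rather than by the entity, a new column on the entity does not change the API until someone edits the contract.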

Production-Focused Skills

These skills address what usually makes backend systems harder to operate and harder to trust.

- authorization-rules: business access control beyond route guards

- observability-and-telemetry: what gets logged, measured, and traced

- external-integrations-and-resilience: timeouts, retries, idempotency, and behavior under partial failure

- auditing-and-actor-context: how actor context flows through a change and gets preserved

- performance-and-query-shaping: fetch strategy, projections, pagination, and N+1 review

- caching-strategy: cache fit, invalidation approach, and stale-data tradeoffs
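As an illustration of the rules external-integrations-and-resilience might ask for, the sketch below shows bounded retries with exponential backoff that reuse one idempotency key across attempts. It is plain Kotlin under stated assumptions: RetryPolicy, callWithRetry, and the defaults are hypothetical names and values, and timeouts are left to the HTTP client.

```kotlin
import java.util.UUID

// Hypothetical policy object: field names and defaults are assumptions for illustration.
data class RetryPolicy(
    val maxAttempts: Int = 3,
    val initialDelayMs: Long = 100,
    val multiplier: Double = 2.0,
)

// Delay before each retry (there is no delay before the first attempt).
fun backoffDelays(policy: RetryPolicy): List<Long> =
    (0 until policy.maxAttempts - 1).map { attempt ->
        (policy.initialDelayMs * Math.pow(policy.multiplier, attempt.toDouble())).toLong()
    }

// Calls `operation` up to maxAttempts times, passing the SAME idempotency key
// on every attempt so the provider can deduplicate a request that was
// delivered but whose response was lost.
// This sketch retries on any exception; a real version would retry only on
// failures known to be transient.
fun <T> callWithRetry(
    policy: RetryPolicy,
    idempotencyKey: String = UUID.randomUUID().toString(),
    sleep: (Long) -> Unit = { Thread.sleep(it) },
    operation: (idempotencyKey: String) -> T,
): T {
    require(policy.maxAttempts >= 1) { "maxAttempts must be at least 1" }
    val delays = backoffDelays(policy)
    var lastFailure: Exception? = null
    for (attempt in 0 until policy.maxAttempts) {
        try {
            return operation(idempotencyKey)
        } catch (e: Exception) {
            lastFailure = e
            // Sleep only if another attempt will follow.
            if (attempt < delays.size) sleep(delays[attempt])
        }
    }
    throw IllegalStateException("Gave up after ${policy.maxAttempts} attempts", lastFailure)
}
```

Passing `sleep` as a parameter keeps the retry behavior testable without real waiting.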

Review and Investigation Commands

- /review-query: inspect query shape and data access risk

- /review-security-surface: review exposure, auth, and authorization edges

- /debug-prod-issue: investigate runtime failures with a repeatable flow

These commands reflect a simple reality: delivery is not only writing new code. A real backend repo also needs repeatable ways to review, inspect, and diagnose behavior.

Manual Endpoint Testing

test-endpoint generates curl commands and Postman request details for exercising endpoints manually. It is a testing aid, not an investigation workflow.

How the Pieces Work Together

The most useful thing about this structure is not any single skill. It is the way the repo teaches the agent to start from the right source of truth.

The backend flow is meant to work like this:

- Read the feature from the wiki

- Read the backend platform requirements

- Use the right command for the task

- Pull in the specialist skills that apply

- Use review or debug commands where the change needs proof

Without that structure, an agent starts from a generic instruction like "add a payout approval endpoint." With this structure, the same task goes through a flow that asks much better questions:

- what changed in the contract

- what route security is needed

- what business authorization is required

- whether schema changes are involved

- what should be logged or measured

- what failure modes exist if an integration is involved

- whether query shape and data access need review

That is a more reliable way to build backend features because it reduces hidden variation.
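The questions above can be treated as data rather than as something a reviewer has to remember. The sketch below is a hypothetical checklist type in plain Kotlin. The workspace expresses this through skills and commands, not through this class, so read it only as an illustration of how unanswered questions could be surfaced.

```kotlin
// Hypothetical checklist type: the fields mirror the questions above; names are illustrative.
data class EndpointChange(
    val name: String,
    val contractDiff: String? = null,          // what changed in the contract
    val routeSecurity: String? = null,         // who may reach the route at all
    val businessAuthorization: String? = null, // who may perform this action on this resource
    val touchesSchema: Boolean? = null,        // null means nobody has decided yet
    val telemetry: String? = null,             // what is logged or measured
    val callsExternalSystem: Boolean = false,
    val failureModes: String? = null,          // required only when an integration is involved
    val queryReviewed: Boolean = false,
)

// Returns the questions that are still unanswered, in the order listed above.
fun openQuestions(change: EndpointChange): List<String> = buildList {
    if (change.contractDiff.isNullOrBlank()) add("what changed in the contract")
    if (change.routeSecurity.isNullOrBlank()) add("what route security is needed")
    if (change.businessAuthorization.isNullOrBlank()) add("what business authorization is required")
    if (change.touchesSchema == null) add("whether schema changes are involved")
    if (change.telemetry.isNullOrBlank()) add("what should be logged or measured")
    if (change.callsExternalSystem && change.failureModes.isNullOrBlank()) {
        add("what failure modes exist for the integration")
    }
    if (!change.queryReviewed) add("whether query shape and data access need review")
}
```

A change described only as "add a payout approval endpoint" comes back with six open questions, which is the hidden variation the generic prompt would have left to chance.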

A Concrete Example

Take a feature like adding a payout approval endpoint.

In a typical prompt-driven setup, an agent may generate the controller, service method, DTOs, and maybe a test. Whether it also handles ownership rules, event attribution, auditability, and operational visibility depends on how much context the prompt happened to carry.

With this structure, the intended path is narrower and more explicit:

- /add-endpoint starts the execution flow

- backend-feature-delivery keeps the change aligned with the contract and service boundary

- security-auth handles route-level access

- authorization-rules handles approval permissions and ownership logic

- error-handling keeps responses typed

- migration-conventions applies if schema changes are needed

- auditing-and-actor-context keeps user and action context visible in the model

- observability-and-telemetry makes the approval path easier to diagnose

- /review-security-surface or /review-query can inspect the result afterward
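The split between route-level access and business authorization in that path is easier to see in code. The following is a minimal plain-Kotlin sketch, not the TreasuryFlow implementation. The APPROVER role, the rule that a requester cannot approve their own payout, and all type names are assumptions chosen for illustration.

```kotlin
import java.time.Instant

// All names here are hypothetical; they illustrate the separation of concerns, not the real model.
data class Actor(val userId: String, val roles: Set<String>)
data class Payout(val id: String, val requestedBy: String, val status: String)
data class AuditEntry(val actorId: String, val action: String, val targetId: String, val at: Instant)

sealed interface ApprovalResult
data class Approved(val payout: Payout, val audit: AuditEntry) : ApprovalResult
data class Rejected(val reason: String) : ApprovalResult

// Route-level access (the security-auth concern): may this caller reach the endpoint at all?
fun canReachApprovalRoute(actor: Actor): Boolean = "APPROVER" in actor.roles

// Business authorization (the authorization-rules concern): may this actor approve THIS payout?
// Returns null when the approval is allowed, otherwise the reason it is denied.
fun approvalDenialReason(actor: Actor, payout: Payout): String? = when {
    payout.status != "PENDING" -> "payout is not pending"
    payout.requestedBy == actor.userId -> "requester cannot approve their own payout"
    else -> null
}

// Applies both checks, then records who did what (the auditing-and-actor-context concern).
fun approve(actor: Actor, payout: Payout, now: Instant = Instant.now()): ApprovalResult {
    if (!canReachApprovalRoute(actor)) return Rejected("missing APPROVER role")
    approvalDenialReason(actor, payout)?.let { return Rejected(it) }
    val approved = payout.copy(status = "APPROVED")
    return Approved(approved, AuditEntry(actor.userId, "PAYOUT_APPROVED", payout.id, now))
}
```

Keeping the two checks in separate functions means a route guard can change without anyone touching the ownership rule, and the reverse.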

This is not about making the agent slower. It is about making the repo easier to trust.

Why This Matters to Different People

For Tech Leads

This reduces architectural inconsistency.

The repo starts to carry more of its own engineering policy. You do not need to restate the same backend expectations in every prompt or every PR comment. Review surfaces become clearer. Design intent stays closer to the codebase.

For Product Managers

This reduces handoff inconsistency.

The backend is tied to the same wiki and feature state the rest of the team is using. That makes it less likely that implementation differs from what was actually agreed. It also makes product ambiguity easier to spot before code hardens around it.

For Senior Backend Developers

This is where the structure either earns trust or it does not.

The value is not "AI that knows Spring Boot." The value is that it separates concerns the way a mature backend usually needs them separated. Auth is not the same as business authorization. Operational visibility is not an afterthought. Query shaping, integration resilience, and audit context each have a dedicated skill rather than being folded into generic backend guidance.

That is the difference between a repo that generates code and a repo that helps maintain standards.

Backend Skill Structure

The directory tree shows the intent more clearly than the skill names alone do.

The wiki defines what to build. Commands define how work moves. Skills define the backend rules. Platform docs anchor the local implementation model.

What I Think Is Actually Valuable Here

The useful idea here is not "AI for Spring Boot." That framing is too broad to say anything meaningful.

The useful idea is narrower: the workspace gives backend teams a reusable way to keep product context, workflow structure, and technical judgment aligned in one place.

That reduces cognitive load. It makes review easier. It makes the codebase more teachable. It gives the agent fewer chances to improvise in the wrong direction.

It also gives the human team a better place to disagree. Not in the middle of generated code after the fact, but in the wiki, in the skill boundaries, and in the workflow itself.

That is a much healthier place for backend standards to live.

What This Looks Like Under Real Pressure

A CRUD demo does not expose the important things. A finance-oriented backend does.

It forces the repo to answer harder questions:

- who can approve what

- how audit semantics should work

- what a safe refresh-token flow looks like

- what needs to happen when an external provider retries a callback

- how a migration should evolve without creating avoidable operational risk

- what should be logged, measured, or reviewed when money movement is involved

That is exactly the environment where backend skills either carry weight or they do not.
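Take the retried-callback question as an example. A minimal sketch of one possible answer, in plain Kotlin, is to deduplicate on the provider's event ID before applying any change. The callback shape and the in-memory set are assumptions. A real service would more likely rely on a unique constraint in the database, written in the same transaction as the status update.

```kotlin
// Hypothetical callback shape; a real provider's payload and ID semantics will differ.
data class ProviderCallback(val eventId: String, val payoutId: String, val status: String)

enum class CallbackOutcome { APPLIED, DUPLICATE_IGNORED }

// In-memory stand-in for a table with a unique constraint on the provider event ID.
class CallbackProcessor(private val applyStatus: (payoutId: String, status: String) -> Unit) {
    private val seenEventIds = mutableSetOf<String>()

    // Records the event ID first; `add` returns false when the ID was already present,
    // so a retried delivery is acknowledged without applying the change twice.
    @Synchronized
    fun handle(callback: ProviderCallback): CallbackOutcome {
        if (!seenEventIds.add(callback.eventId)) return CallbackOutcome.DUPLICATE_IGNORED
        try {
            applyStatus(callback.payoutId, callback.status)
        } catch (e: Exception) {
            // Forget the ID again so the provider's next retry can succeed.
            seenEventIds.remove(callback.eventId)
            throw e
        }
        return CallbackOutcome.APPLIED
    }
}
```

The point of the sketch is the ordering: the duplicate check happens before any money-related state changes, and a failed attempt does not poison later retries.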

This is the model Prism implements. TreasuryFlow is a working Spring Boot backend built on it, a codebase where these questions are not hypothetical.

Closing

What makes this approach convincing is the one thing most scaffolding skips: it keeps the repo consistent after the first feature is no longer the interesting part.